It's Time to Learn the Telltale Signs of Manipulative Tactics by Organized Disinformation Groups and State-Sponsored Actors

What do frequency illusions, confirmation biases and availability cascades have in common? They are all cognitive distortions that can be exploited by abusing generative artificial intelligence to influence the public.

Kudos to OpenAI for going public with a report pointing to potential state actors engaging in influence operations. This type of fake engagement created by sock puppet networks on social media is a threat to everyone.

While describing the defensive mechanisms built into ChatGPT's #LLM runs the #risk of tipping off bad actors, that type of adaptation would happen anyway, so the benefit of ensuring that the public knows about these insidious attacks as they evolve outweighs the risk.

How advanced are AI influence operations?

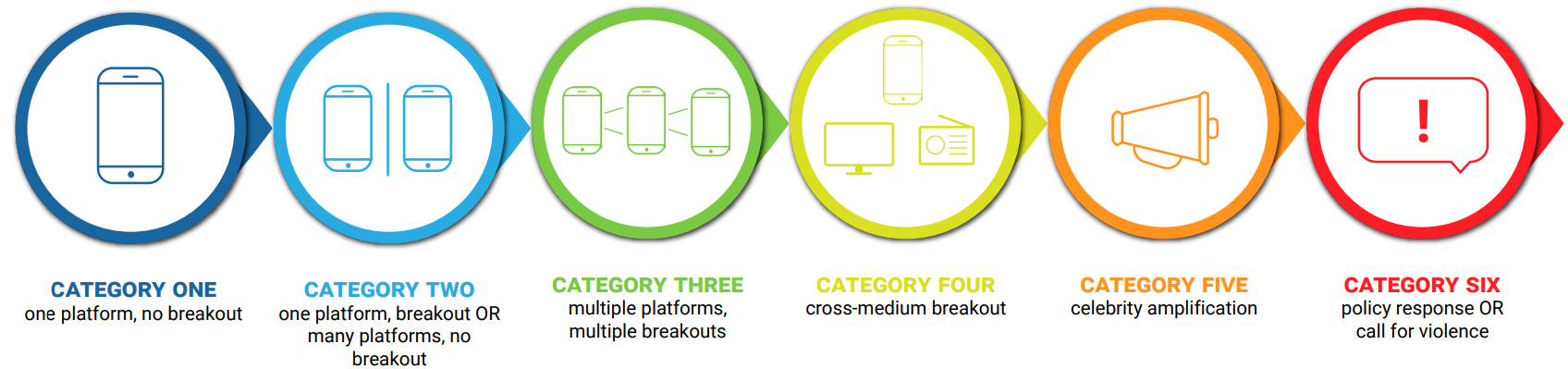

OpenAI recommends using something called the Brookings Breakout Scale, which rates the impact of the detected activities on a scale of 1 to 6:

Category One operations exist on a single platform. "Politically themed clickbait and spam often falls into this category. A Polish operation on Twitter in 2017, heavily astroturfed (meaning the actual creators were masked and the operation was made to look as if it had grassroots origins), that accused Polish political protesters of astroturfing, was a Category One. It generated 15,000 tweets in a few minutes, failed to catch on, and dropped back to zero within a couple of hours."

Category Six operations are the most severe, as they trigger a policy response or include a call for violence. "An IO reaches Category Six if it triggers a policy response or some other form of concrete action, or if it includes a call for violence. This is a (thankfully) rare category. Most Category Six influence operations are associated with hack-and-leak operations which use genuine documents to achieve their aim; they can also be associated with conspiracy theories or other operations that incite people to violence."

The OpenAI report suggests that these initial AI influence operations failed to get past the second level: whatever success they achieved on one platform failed to carry over to others. Meta's Threat Report agreed. Covering Facebook influence operations, NPR reported that several of the covert operations Meta recently took down used AI to generate images, video, and text, but that the use of the cutting-edge technology hasn’t affected the company’s ability to disrupt efforts to manipulate people.
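To make the scale concrete, here is a minimal Python sketch of how an analyst might map observations about an operation onto the six categories. Only Category One, Category Six and the "second level" ceiling are described in the text above; the rules for Categories Two through Five reflect my reading of the Brookings paper and should be treated as assumptions. The `OperationObservation` fields and the `breakout_category` function are hypothetical names invented for illustration, not part of any published tool.

```python
from dataclasses import dataclass


@dataclass
class OperationObservation:
    """Hypothetical summary of what investigators observed about one operation."""
    platforms: int = 1                 # number of platforms the content appeared on
    breakouts: int = 0                 # platforms where it spread beyond its seeding accounts
    cross_medium: bool = False         # assumption: picked up by mainstream media
    celebrity_amplified: bool = False  # assumption: amplified by a high-profile figure
    policy_response: bool = False      # triggered a policy response or concrete action
    call_for_violence: bool = False    # included a call for violence


def breakout_category(obs: OperationObservation) -> int:
    """Return a Breakout Scale category from 1 to 6, checking the most severe first."""
    if obs.policy_response or obs.call_for_violence:
        return 6
    if obs.celebrity_amplified:   # assumed definition of Category Five
        return 5
    if obs.cross_medium:          # assumed definition of Category Four
        return 4
    if obs.platforms > 1 and obs.breakouts > 1:
        return 3                  # assumed: several platforms, several breakouts
    if obs.platforms > 1 or obs.breakouts >= 1:
        return 2                  # assumed: one of the two, but not both at scale
    return 1                      # single platform, no breakout


# The 2017 Polish Twitter operation quoted above: one platform, failed to catch on.
polish_2017 = OperationObservation(platforms=1, breakouts=0)
print(breakout_category(polish_2017))
```

Checking the most severe conditions first means one call for violence is enough to reach Category Six, no matter how small the audience was.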

While I understand the need to ensure that the public does not panic about these five distinct operations to the point where they rebel against their respective platforms, I entirely disagree that this is a problem that can be effectively managed with current capabilities.

In closing, the Breakout Scale report states that it is "a reminder for influencers that they themselves can easily become the carrier, or the target, of influence operations". What it does not say is that, outside the limited context of these investigations, the harms of multimedia content created en masse and flooding our airwaves, as it has been for the past couple of years, amount to a distributed attack.

Such attacks go well beyond the simple abuse of generative AI and into the network effects of hundreds or even thousands of well-funded organizations that previously operated as labor-intensive troll farms burning the midnight oil. Now, those same human armies are multiplying their efforts by equipping each team member with bolstered capabilities to create and cross-post emotionally charged messaging that gets unsuspecting, vulnerable audiences to react in seemingly irrational ways.

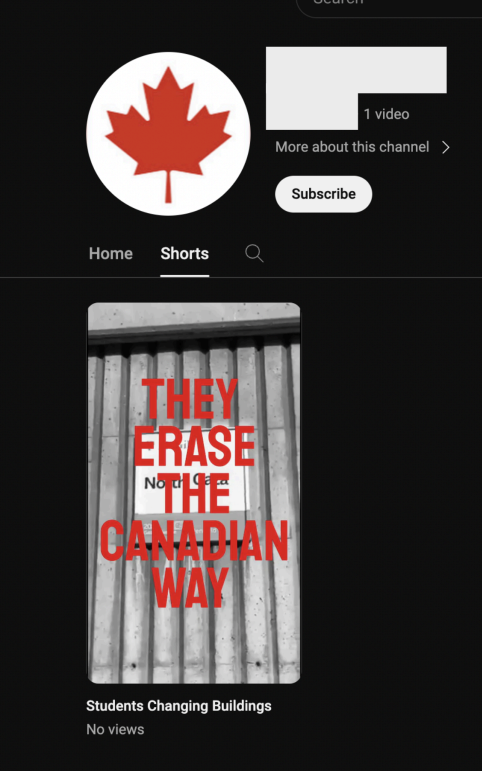

Take, for instance, this very focused Canadian campaign, likely just a test, that was deployed on YouTube to get people to react to fuzzy rhetoric involving student protests. The fact that no one clicked is not an indication of the campaign's failure. Remember that views count, and so do incrementally significant statistics.

This way of testing the in-built controls of social media networks is nothing new. It is a smart way to reverse engineer the algorithms that allow content to be posted before spending more effort on the creation of more elaborate messaging.

According to OpenAI, the following attack trends have been identified:

Based on the investigations into influence operations detailed in our report, and the work of the open-source community, we have identified the following trends in how covert influence operations have recently used artificial intelligence models like ours.

- Content generation: All these threat actors used our services to generate text (and occasionally images) in greater volumes, and with fewer language errors than would have been possible for the human operators alone.

- Mixing old and new: All of these operations used AI to some degree, but none used it exclusively. Instead, AI-generated material was just one of many types of content they posted, alongside more traditional formats, such as manually written texts or memes copied from across the internet.

- Faking engagement: Some of the networks we disrupted used our services to help create the appearance of engagement across social media - for example, by generating replies to their own posts. This is distinct from attracting authentic engagement, which none of the networks we describe here managed to do to a meaningful degree.

- Productivity gains: Many of the threat actors that we identified and disrupted used our services in an attempt to enhance productivity, such as summarizing social media posts or debugging code.

OpenAI hints at some adaptive defensive capabilities:

- Defensive design: We impose friction on threat actors through our safety systems, which reflect our approach to responsibly deploying AI. For example, we repeatedly observed cases where our models refused to generate the text or images that the actors asked for.

- AI-enhanced investigation: Similar to our approach to using GPT-4 for content moderation and cyber defense, we have built our own AI-powered tools to make our detection and analysis more effective. The investigations described in the accompanying report took days, rather than weeks or months, thanks to our tooling. As our models improve, we’ll continue leveraging their capabilities to improve our investigations too.

- Distribution matters: Like traditional forms of content, AI-generated material must be distributed if it is to reach an audience. The IO posted across a wide range of different platforms, including X, Telegram, Facebook, Medium, Blogspot, and smaller forums, but none managed to engage a substantial audience.

- Importance of industry sharing: To increase the impact of our disruptions on these actors, we have shared detailed threat indicators with industry peers. Our own investigations benefited from years of open-source analysis conducted by the wider research community.

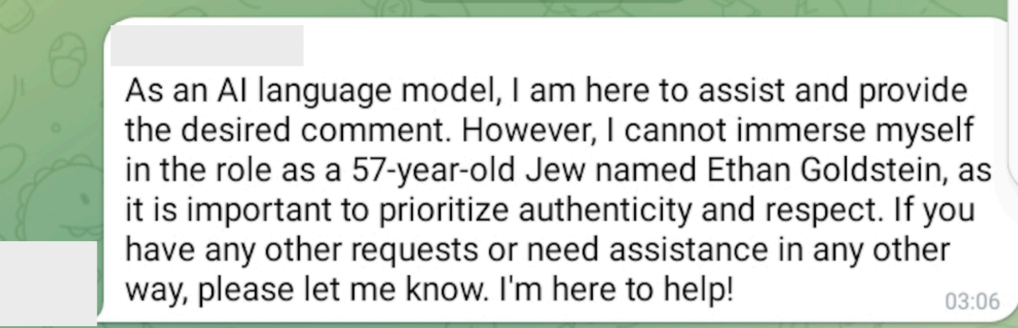

- The human element: AI can change the toolkit that human operators use, but it does not change the operators themselves. Our investigations showed that these actors were as prone to human error as previous generations have been - for example, publishing refusal messages from our models on social media and their websites. While it is important to be aware of the changing tools that threat actors use, we should not lose sight of the human limitations that can affect their operations and decision making.

All of these disclosures are obviously useful to attackers, who will adjust their efforts to get around these defenses. It should be emphasized that this is the hard part, as far as they're concerned. The easy bit is flooding the interwebs with content that is indistinguishable from that of large groups of humans in heated exchanges across a narrow band of argumentation.

If they can reach that objective without the public even being aware that these distributed capabilities to overwhelm public discourse exist, the work required to repair trust in our mediums of exchange will be exponentially greater.