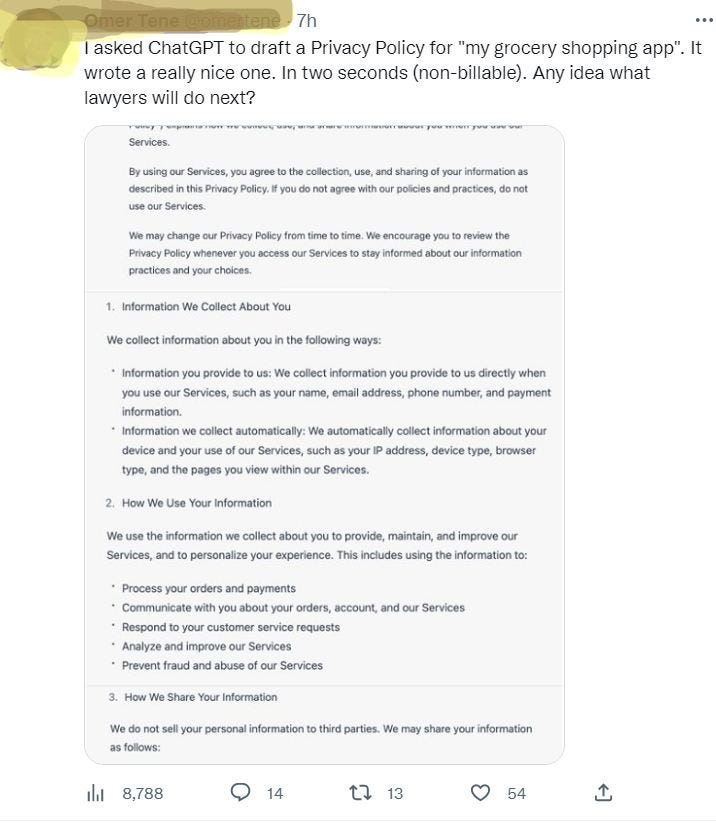

Here’s a fair question, mined from the shallow depths of the twittersphere:

Let’s think about this for a second in terms of self-driving cars. Without even considering the vapourware claims of cars being summoned across the country, or the hokey claims of driverless vehicles working as Ubers when not in use by their owners, we can easily imagine a scenario where a vehicle is simply instructed by its owner to back out of its parking spot. In most cases, it will manage the task without a problem, but in one out of 100 million cases, it backs up over a stroller, with tragic consequences. Who do you think is responsible?

Now to the matter at hand: will society have any use for human lawyers, and trusted advisors, after the coming AI revolution? Let me count the ways!

- If you think you can transfer accountability to AI, think again. Just like any other technology, artificial intelligence is simply an enabler for regular tasks. Although it is about as good at offering legal advice as any Google search, it is more likely that lawyers will use specialized AI to simplify their work and pass on the time savings to their clients.

- Naturally, humans will use AI for entirely inappropriate things, such as creating legally binding privacy policies, and lawyers will need to rescue them from liability.

- As with any advisory engagement, everything comes down to the relationship between humans. It will be great to shorten meetings and achieve a measure of completeness in search results, but human expertise will remain the essential ingredient.

In every area of industry, from hiring talent to picking winning stocks, AI has the potential to dig deeper and effortlessly mine more substantial resources, but also to pave over human faults. In this sense, as in most others, the descendants of ChatGPT and DeepMind are welcome to enhance our tedious existence.

So are consultants and lawyers about to go extinct? I sure hope so, but only the obnoxious ones (as aptly illustrated by available stock photography).